Products suited for your business

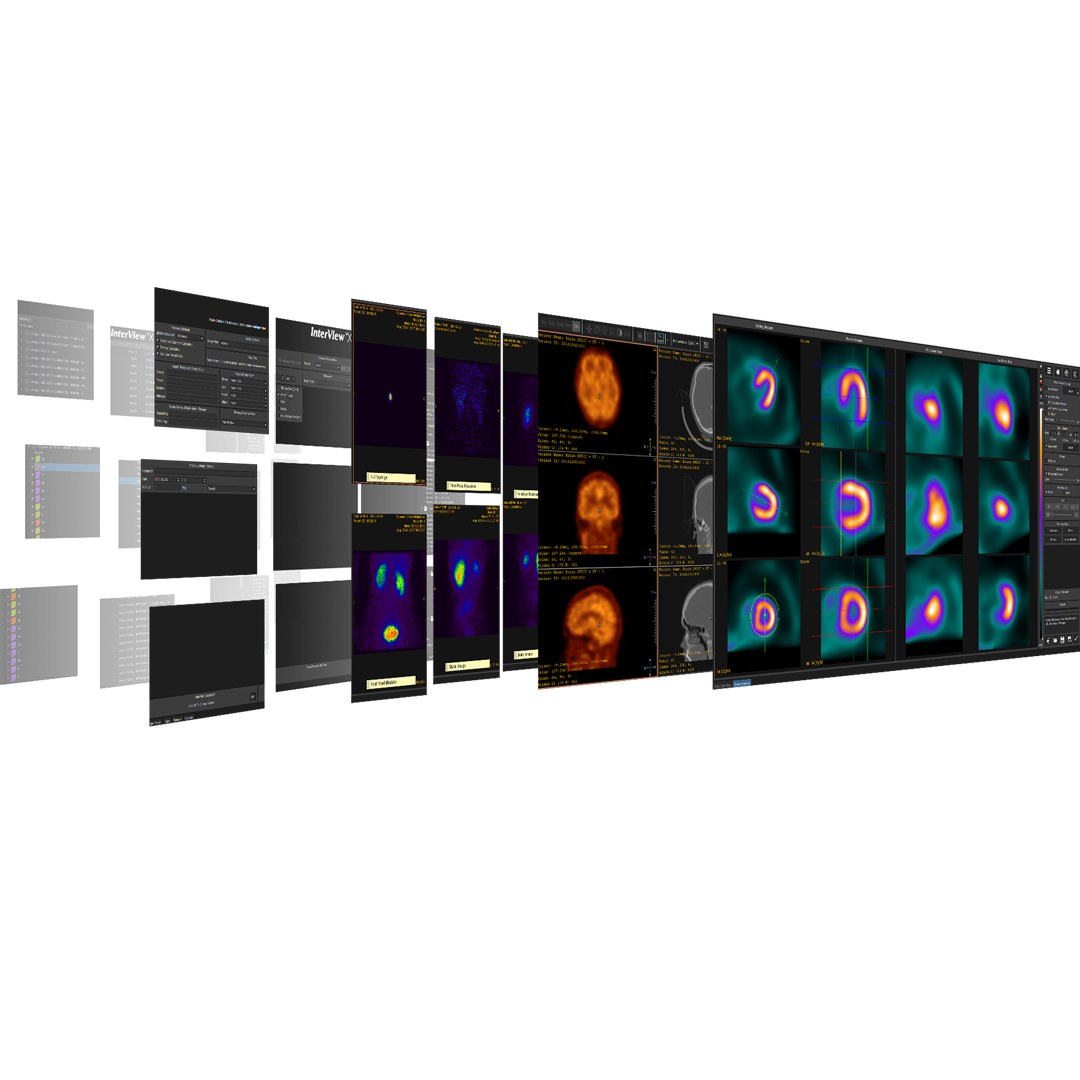

Preclinical products

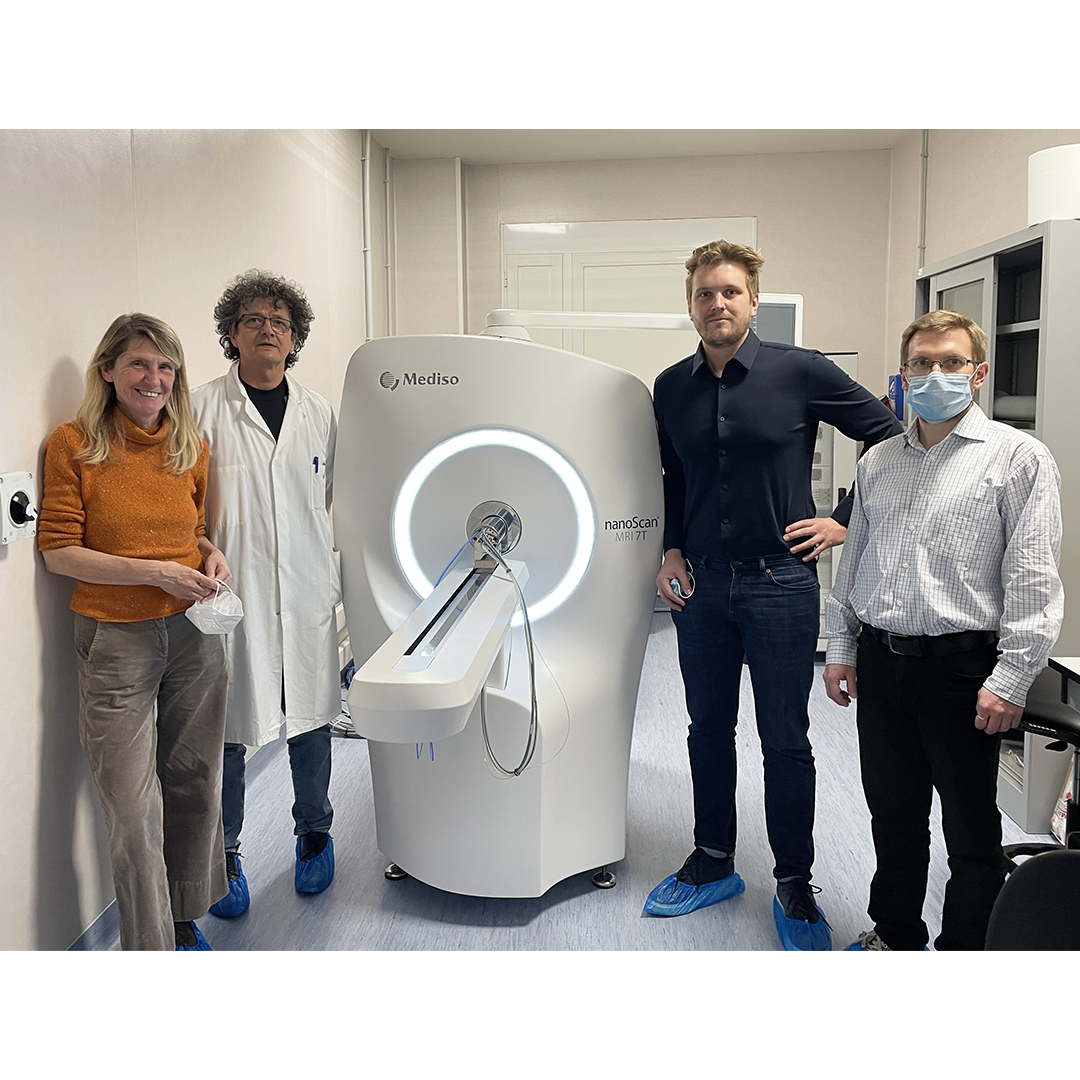

Systems in the Mediso nanoScan® family deliver the current and future promise of molecular imaging by combining maximum functional information with precise anatomical detail.

Latest news & events

About us

Since 1990, Mediso has been creating new technologies and molecular imaging systems.

Clinical and preclinical solutions for hospitals, clinics and scientific institutions

How can we help you?

Don't hesitate to contact us for technical information or to find out more about our products and services.

Get in touch